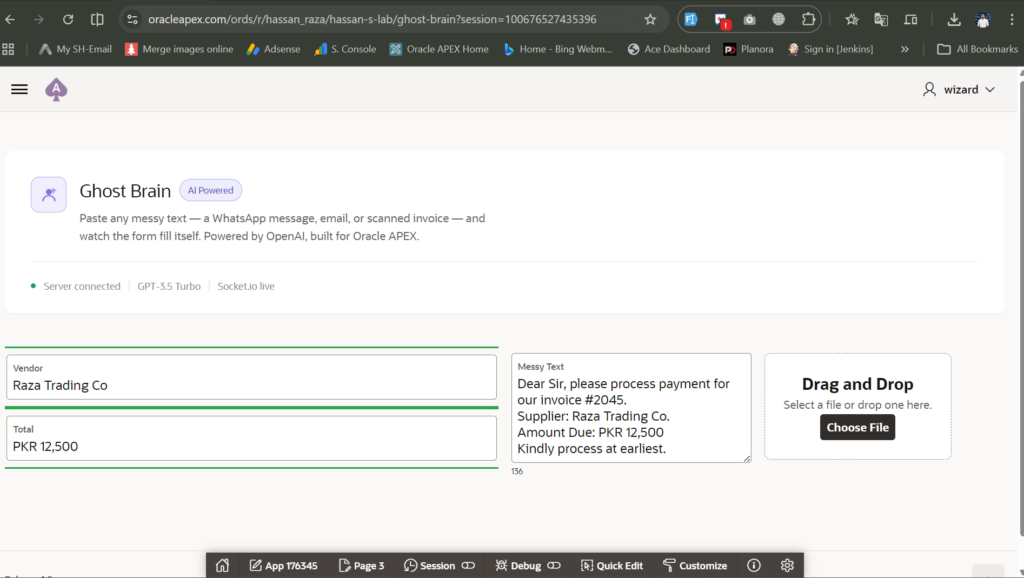

Your client sends a WhatsApp message in broken Urdu. It contains an invoice total and a vendor name buried inside three lines of noise. Your Oracle APEX form is waiting. PL/SQL is staring at you, completely helpless.

That’s the problem I solved this week.

I built what I call the Ghost Brain – a Node.js server that sits between messy human input and a clean Oracle APEX form. It reads chaos. It returns structure. And when you show it to a client, this AI-powered Oracle APEX form filling solution makes the form fill itself while they watch.

This is a complete build-in-public breakdown of how it works, what went wrong, and exactly where you can use this in production.

What Is the Ghost Brain and Why Does It Exist?

The Problem PL/SQL Simply Cannot Solve

PL/SQL is exceptional at processing structured data. Give it a clean number, a formatted date, or a known string pattern and it performs. Fast. Reliable.

But PL/SQL cannot read human language.

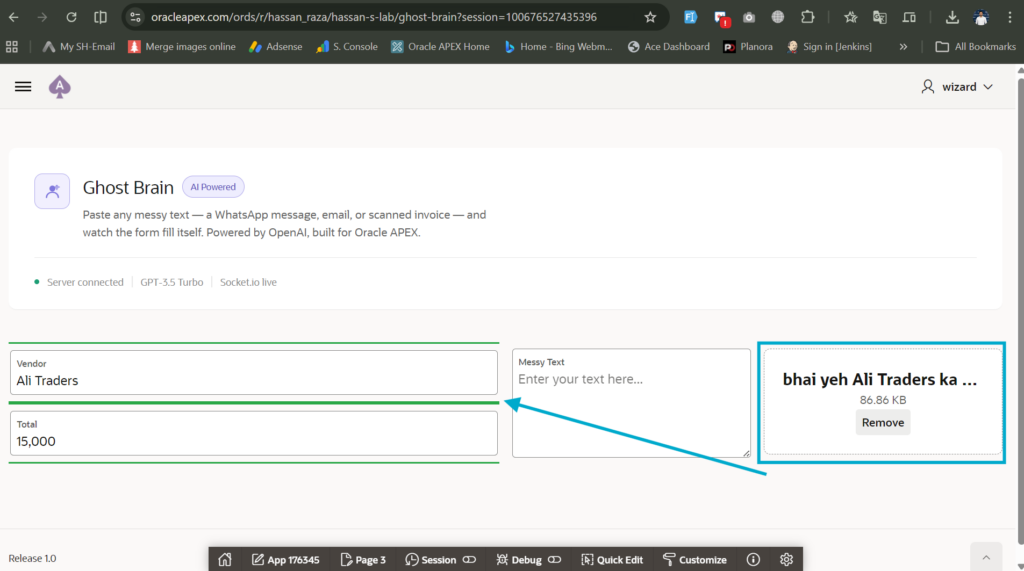

It cannot look at “bhai yeh Ali Traders ka bill hai, total fifteen thousand rupees” and extract VENDOR = Ali Traders and TOTAL = 15000. That requires language understanding. That requires AI.

This is not a criticism of PL/SQL. It is just not the right tool for this job.

Why AI Needs to Live Outside APEX (Not Inside It)

Oracle APEX’s native AI integrations connect to OpenAI and OCI Generative AI through REST endpoints. They work well for generating SQL, building app blueprints, and assisting developers. APEX 24.2 even added a new AI Dynamic Action for end users.

But none of those handle real-time, unstructured text input flowing into a form mid-session.

For that, you need a persistent server. One that stays connected to your APEX page via WebSocket and responds the moment a user drops text or uploads a file.

That is what the Ghost Brain is.

The 3-Piece Stack

The architecture is straightforward. Three components.

Node.js runs the server. Socket.io keeps a live, real-time bidirectional connection between your APEX page and the server. OpenAI GPT-3.5-turbo reads the messy text and returns clean JSON. APEX receives the JSON and fills the form fields by name.

This is a proven pattern for real-time Oracle integrations that developers have used since 2017. The AI layer is new. The plumbing is not.

How to Build the Ghost Brain Server From Scratch

Setting Up Node.js and Your Project Folder

Install Node.js from nodejs.org. Choose the LTS version. After installation, open Command Prompt and run:

node -v

npm -vBoth return version numbers. Good. Now create your project:

mkdir ghost-brain

cd ghost-brain

npm init -y

npm install express socket.io openai dotenv pdf2jsonCreate a .env file in the root folder. Put your OpenAI key there:

OPENAI_API_KEY=your_key_hereCreate a .gitignore file immediately. Add these two lines:

node_modules

.envDo not skip this. More on why in a moment.

Writing the index.js – The Actual Brain Code

Create index.js in your project folder. This is the complete server:

require('dotenv').config();

const express = require('express');

const http = require('http');

const { Server } = require('socket.io');

const { OpenAI } = require('openai');

const PDFParser = require('pdf2json');

const app = express();

const server = http.createServer(app);

const io = new Server(server, { cors: { origin: "*" } });

const openai = new OpenAI({ apiKey: process.env.OPENAI_API_KEY });

async function extractWithAI(text) {

const completion = await openai.chat.completions.create({

model: "gpt-3.5-turbo",

messages: [

{

role: "system",

content: "Extract data into JSON only. Keys: P1_VENDOR, P1_TOTAL. Return raw JSON, no explanation, no markdown."

},

{ role: "user", content: text }

],

});

const rawContent = completion.choices[0].message.content;

return JSON.parse(rawContent);

}

io.on('connection', (socket) => {

console.log('APEX connected!');

socket.on('text_input', async (data) => {

try {

const ghostData = await extractWithAI(data.rawText);

socket.emit('fill_form', ghostData);

} catch (err) {

socket.emit('error', { message: 'Failed to process text' });

}

});

socket.on('pdf_input', async (data) => {

try {

const pdfParser = new PDFParser();

pdfParser.on('pdfParser_dataReady', async (pdfData) => {

const text = pdfData.Pages.map(page =>

page.Texts.map(t =>

decodeURIComponent(t.R.map(r => r.T).join(' '))

).join(' ')

).join('\n');

const ghostData = await extractWithAI(text);

socket.emit('fill_form', ghostData);

});

const pdfBuffer = Buffer.from(data.base64, 'base64');

pdfParser.parseBuffer(pdfBuffer);

} catch (err) {

socket.emit('error', { message: 'Failed to read PDF' });

}

});

});

server.listen(3000, () => {

console.log('Ghost Brain is listening on port 3000');

});The One Bug That Breaks Everything (and the Fix)

The original code I worked from had this line:

const ghostData = JSON.parse(completion.choices.message.content);choices is an array. That line throws a TypeError every single time. Silently. No warning. The form just never fills.

The fix is one character:

const ghostData = JSON.parse(completion.choices[0].message.content);Always index into choices[0]. Always.

How Do I Connect the Ghost Brain to Oracle APEX?

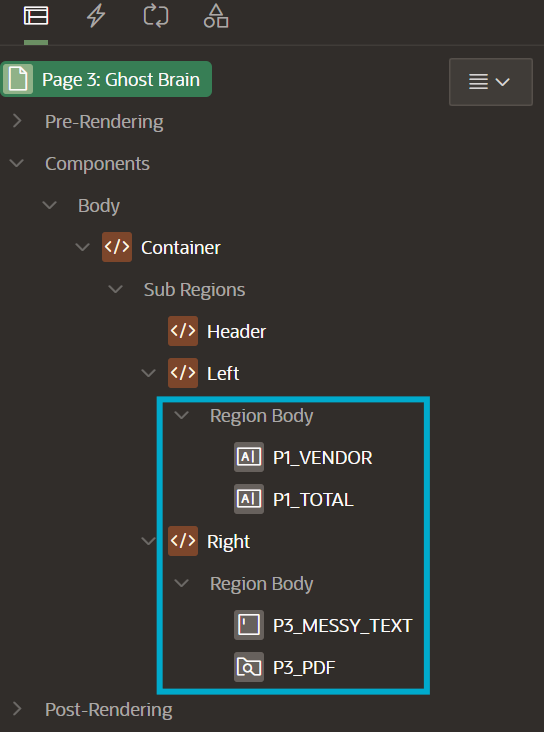

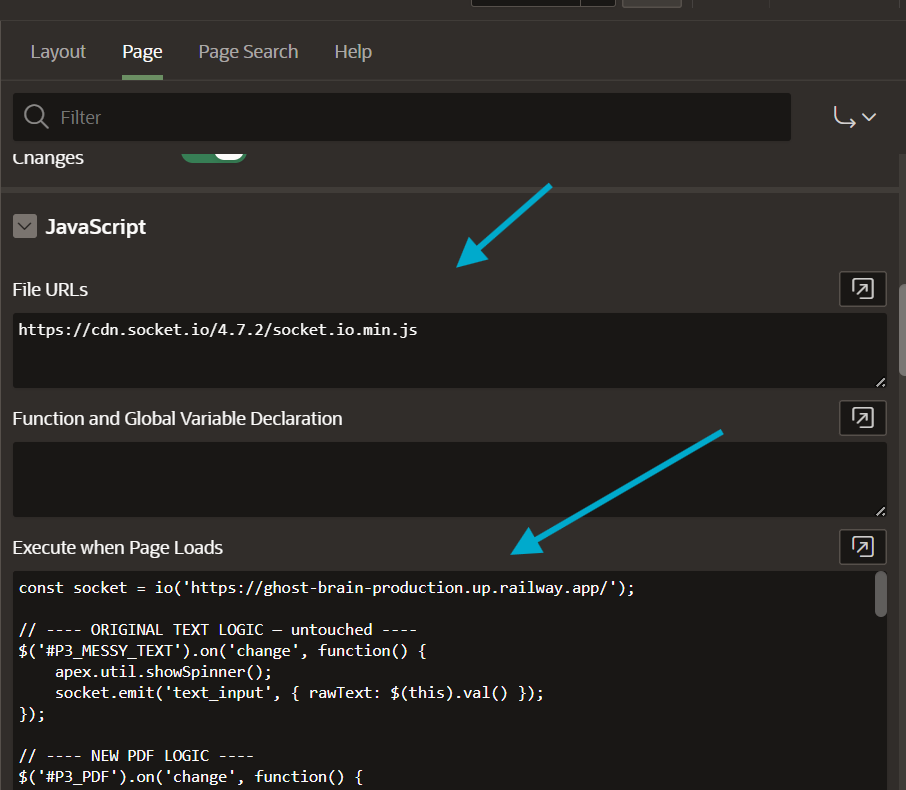

Adding Socket.io to Your APEX Page

Go to your APEX page properties. Under JavaScript → File URLs, paste:

https://cdn.socket.io/4.7.2/socket.io.min.jsBuilding the Form Items That Will Auto-Fill

Create these page items on your APEX page:

| Item Name | Type | Label |

|---|---|---|

P1_MESSY_TEXT | Textarea | Paste Raw Text |

P3_PDF | File Upload | Upload Invoice PDF |

P1_VENDOR | Text Field | Vendor Name |

P1_TOTAL | Text Field | Total Amount |

The item names matter. Whatever names you use in APEX must match the JSON keys you tell the AI to return. If your APEX item is P1_VENDOR_NAME but your prompt says P1_VENDOR, nothing fills.

Define your item names first. Write the AI prompt around them. That is the correct order.

The JavaScript That Ties Everything Together

Paste this in Execute when Page Loads:

const socket = io('https://your-railway-url.up.railway.app');

$('#P1_MESSY_TEXT').on('change', function() {

apex.util.showSpinner();

socket.emit('text_input', { rawText: $(this).val() });

});

$('#P3_PDF').on('change', function() {

const file = this.files[0];

if (!file || file.type !== 'application/pdf') return;

apex.util.showSpinner();

const reader = new FileReader();

reader.onload = function(e) {

const base64 = e.target.result.split(',')[1];

socket.emit('pdf_input', { base64: base64 });

};

reader.readAsDataURL(file);

});

socket.on('fill_form', function(data) {

$('.u-Processing').remove();

Object.keys(data).forEach(function(key) {

$s(key, data[key]);

$('#' + key + '_CONTAINER').css('border', '2px solid #28a745');

});

});

socket.on('error', function(err) {

$('.u-Processing').remove();

apex.message.showErrors([{ type: 'error', message: err.message }]);

});Both text and PDF share the same fill_form handler. One event. Two inputs. The server does the same thing with both.

The Real Story – What Actually Went Wrong When I Built This

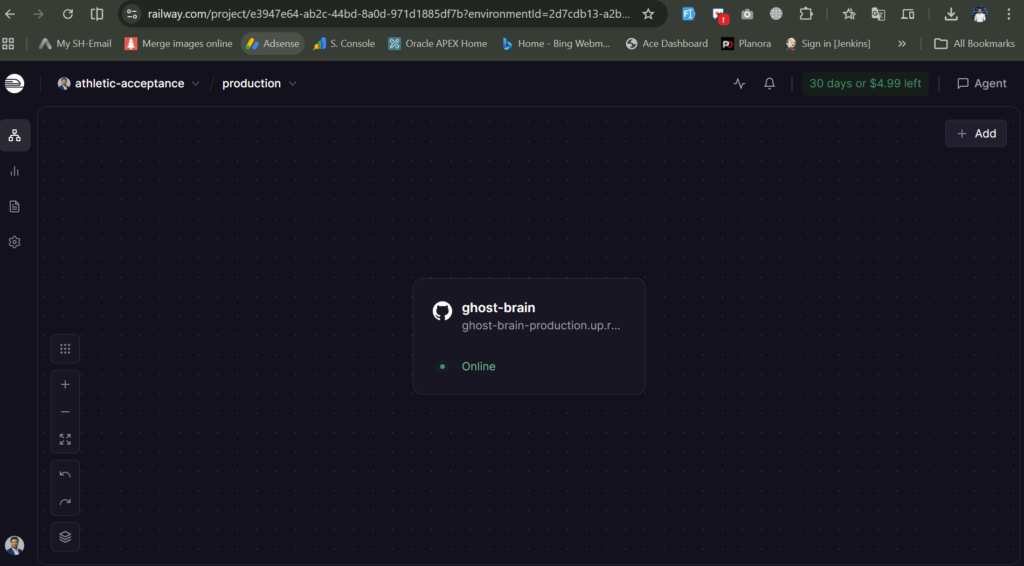

The CORS Wall That Stopped Everything Cold

The first time I wired up APEX and tested, the form just spun. Spinner on. Nothing happens. I opened the browser console and saw this:

Access to fetch at 'http://localhost:3000/socket.io/...' has been blocked

by CORS policy: Permission was denied for this request to access the

loopback address space.The problem is a browser security rule. A website on the public internet cannot talk to localhost on your personal machine. Full stop. No workaround.

The fix is deployment. Your Node.js server needs to be on the public internet, not on your laptop.

I used Railway’s free deployment tier. Push your code to GitHub. Connect the repo to Railway. Railway deploys it and gives you a public URL in about 60 seconds. Replace localhost:3000 in your APEX JavaScript with that URL and the CORS error disappears.

Accidentally Pushing node_modules and the .env File to GitHub

This one hurt.

I pushed my code to GitHub before creating .gitignore. The node_modules folder went up – 516 files changed in one commit. Then I added the .env file to the project and pushed again without checking. My OpenAI API key was now public on GitHub.

The fix for node_modules:

git rm -r --cached node_modules

git add .

git commit -m "remove node_modules"

git push origin mainThe fix for the exposed API key: rotate it immediately at platform.openai.com. Delete the old key. Generate a new one. Update it in Railway’s Variables tab. Railway injects it at runtime so you never need .env on the server at all.

The lesson is simple. Create .gitignore before your first commit. Not after.

What I Learned Deploying to Railway for the First Time

Railway auto-detects Node.js projects and runs npm install on every deploy. You do not push node_modules. You push package.json. Railway handles the rest.

Environment variables go in Railway’s Variables tab, not in your code. Store OPENAI_API_KEY there. Your index.js reads it with process.env.OPENAI_API_KEY. The key never touches GitHub.

Can the Ghost Brain Read PDF Files Too?

Yes. Same server, same logic, one extra handler.

Adding pdf2json to Extract Text From Uploaded Invoices

pdf2json converts a PDF buffer into structured JSON that includes all the text on every page. The server reads that text and passes it to the same extractWithAI function that handles raw text input.

This works on text-based PDFs. Digital invoices, Word documents exported to PDF, and emails saved as PDF all work. Scanned paper documents (photographed images saved as PDF) will not extract text this way. That requires OCR, which is a separate layer.

One Handler, Two Inputs – How Text and PDF Share the Same Logic

The extractWithAI function sits outside both event handlers. Both text_input and pdf_input call it. Both emit fill_form when done. APEX receives the same event either way and fills the same fields.

The user does not know or care how it works. They paste text or upload a file. The form fills.

Where Can You Actually Use AI-Powered Oracle APEX Form Filling in the Real World?

This is not a demo toy. It solves a real data entry problem that exists in almost every industry.

Invoice and vendor management. A clerk pastes a forwarded email from a supplier. The vendor name and total amount extract instantly into the APEX purchasing form. No manual typing. No data entry errors.

Hospital and clinic intake. A patient describes symptoms in plain language at reception. The intake form populates the relevant fields automatically. Staff spend time on the patient, not the keyboard.

HR and recruitment portals. A recruiter pastes a candidate’s CV as raw text. Name, current role, and years of experience extract into the candidate record. Screening happens faster.

Logistics and supply chain. A driver sends a WhatsApp update about a delivery. The dispatcher pastes it. The delivery status form updates in APEX without the dispatcher decoding informal language manually. This pairs naturally with connecting external messaging tools to Oracle APEX for a fully automated pipeline.

Legal and compliance. A user pastes a contract excerpt. Party names, effective dates, and key clause references extract into a structured compliance record.

Client demos. This is the use case that matters most for your business. You open the APEX form. You paste a messy WhatsApp message. The fields fill themselves in two seconds. The client stops thinking about the form and starts thinking about what else you can build them.

Why AI-Powered Form Filling Beats a Standard Dynamic Action Every Time

What Dynamic Actions With PL/SQL Can and Cannot Do

Dynamic Actions in Oracle APEX are powerful. They respond to user events, run PL/SQL processes on the server, update page items, and refresh regions – all without page reloads.

But a Dynamic Action with a PL/SQL process still needs structured input to work with. You can write calling external APIs from within APEX using Oracle Wallet and UTL_HTTP, but that is a round-trip to the database and back. It is synchronous. It adds complexity.

More importantly, common PL/SQL limitations junior developers hit include exactly this problem: PL/SQL does not understand natural language. It never will. It is not designed to.

The Client Demo Moment – When the Form Fills Itself

A standard Dynamic Action demo shows automation. Buttons click. Values update. It is competent.

The Ghost Brain demo shows intelligence. The form reads human language and fills itself. No button. No structure required from the user. Just paste anything.

That is a fundamentally different conversation with a client.

One says “we can automate your process.” The other says “we can make your software understand your people.” If you are building AI-ready Oracle tooling, that second conversation is the one that closes deals.

Three Things to Take Away

First, PL/SQL and AI are not competitors. They work at different layers. PL/SQL handles structured data in the database. AI handles unstructured data at the input layer. Use both.

Second, your local machine is not a server. The CORS error is not a bug. It is a browser security feature. Always deploy your Node.js server to a public host before connecting it to an AI-powered Oracle APEX form filling setup that runs online.

Third, the wow factor is not the technology. It is the moment the user realizes they did not have to type anything. Build toward that moment. Everything else is implementation detail.

Have you tried connecting Node.js to Oracle APEX before? Drop your approach in the comments. I want to see what you have built.

And if you want more content like this, why Oracle APEX remains my platform of choice for exactly these kinds of integrations, this is the right place to keep reading.

Hassan Raza

An Oracle ACE Associate and Senior Oracle Application Developer at S&H Software Solution. I am specialized in Oracle APEX, SQL, and PL/SQL and writes about Oracle development at oraclewithhassan.com